<a href="https://assetsio.gnwcdn.com/discordageverification.png?width=1200&height=630&fit=crop&enable=upscale&auto=webp" target="_blank" rel="noopener">Image Source</a>

We’ve all been there. You boot up your PC or fire up the PS5 to join the squad, only to be met with yet another update and that weirdly smug “empathy banana” loading screen. It’s the classic love-hate relationship: a chaotic blur of voice channels, constant pings, and the occasional security scare. But according to the latest reports from Rock Paper Shotgun, Discord is about to get a lot more personal. And frankly, a little more intrusive. The company is rolling out a mandatory age verification system globally, and it goes well beyond a “check this box if you’re 18” situation.

Discord is tired of guessing how old you are—so it’s building an AI to find out

This isn’t just about entering your birthday anymore. We’re looking at a multi-layered gauntlet: facial age estimation, government ID uploads, and the most controversial piece of the puzzle—a background “age inference” model. Basically, Discord is using AI to watch how you behave to decide if you’re actually an adult or just a kid pretending to be one. It’s a massive shift for a platform that used to feel like the digital version of a private basement hangout. Now? It feels like the bouncer is following you to your table.

I get the frustration, I really do. Nobody wants more friction when they’re just trying to check Elden Ring DLC news or hop into a Call of Duty match. But we have to face facts: Discord isn’t that niche chat app for gamers anymore. A 2023 Statista report puts the platform at over 196 million monthly active users. When you hit that kind of scale, the “Wild West” era of internet anonymity usually comes to a crashing halt, especially when safety enters the conversation.

The pivot to a “teen-by-default” setting isn’t just corporate busywork; it’s a response to some genuinely harrowing reality. We often treat Discord as a simple utility, but for some, it’s been a hunting ground. In June 2023, NBC News reported 35 cases of adults being charged with kidnapping or sexual assault involving the platform, along with 165 cases of prosecution for sharing exploitative material. Then there was the investigation into the 764 group, which showed how the app was used to coordinate horrific abuse across borders.

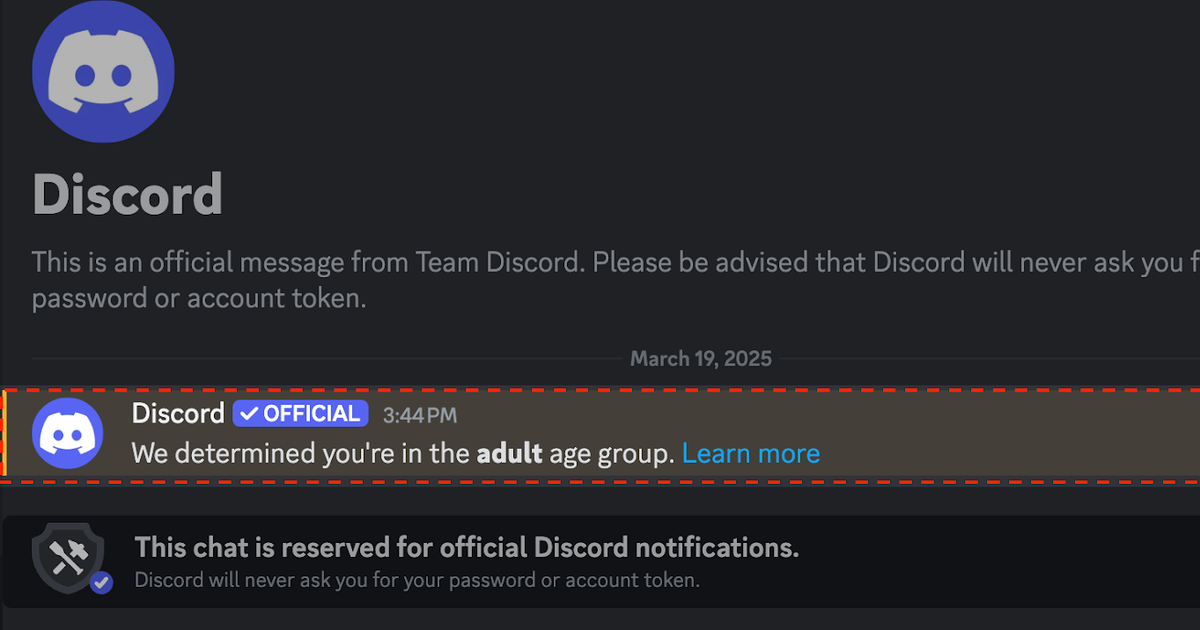

“The platform prompts users to age-assure only within Discord and currently does not send emails or text messages about its age assurance process or results.”

— Discord Official Security Update

When you see numbers like the ones NBC News reported, the annoyance of verifying your age starts to feel a bit trivial. Discord is under an intense microscope right now. It isn’t alone, either: we’ve seen payment giants like Visa and Mastercard cracking down on adult content on Steam and Itch.io. The entire industry is being forced to grow up, and that means proving exactly who is on the other side of the screen. Starting this month, if you want to see “sensitive content” or join age-restricted servers, you have to prove your age. If you don’t? Discord just assumes you’re a minor. It’s a “guilty until proven grown-up” approach that’s going to annoy long-time users, but from a liability standpoint, the company doesn’t have much of a choice.

The “vibe check” algorithm and the end of the anonymous gamer

This is where things get a bit Black Mirror. While you can opt for an ID upload or a facial scan, Discord is also running that “age inference model” in the background. They haven’t been exactly transparent about the data points this AI is munching on. Is it looking at the servers you join? The way you type? If you spend ten hours a day in Roblox, does the AI flag you as a kid? The idea of an algorithm “guessing” your age based on your digital footprint is, let’s be honest, pretty creepy.

It’s one thing to show a passport to a vendor; it’s another to have a silent auditor watching your moves to see if you “act” like a 30-year-old. According to a 2022 Pew Research Center study, about 95% of teens have access to a smartphone, so this is a generation that has grown up inside these apps. Plenty of them know how to bypass basic blocks, so Discord’s answer is to build a machine they can’t outsmart with a fake birth year. But what happens when the AI gets it wrong? Anyone who’s tried to appeal an automated ban on Xbox or Switch knows that talking to an algorithm is a Kafkaesque nightmare. We’re moving into an era where our digital rights are determined by pattern recognition, and that’s a bell you can’t unring.

To try and soften the blow, Discord is recruiting a “Teen Council”—a group of 10 to 12 teenagers to help the company understand what kids actually need. On paper, it sounds like a nice way to give them a seat at the table. In practice? It feels like a corporate focus group meant to put a friendly face on new surveillance measures. If I were on that council, I’d be asking about that background inference. Teens use Discord to escape the prying eyes of adults. By “inferring” their identity, Discord is essentially becoming the digital parent they were trying to avoid.

The reality is that Discord is trying to solve a human problem with a technical fix. Predators don’t always “act” like adults, and kids don’t always “act” like children. An AI might catch the obvious stuff, but the most dangerous users are usually the ones who know how to blend in. Is a background model really going to stop a bad actor, or is it mostly going to annoy the average person who just wants to talk about their Warframe build?

Will other users be able to see my actual age?

No, Discord says your specific age stays private. Other people will only see if you’re in the “adult” or “teen” category based on what channels you can actually access.

What happens if I refuse to verify my age?

Your account will probably default to the “teen” setting. That means you’ll be locked out of NSFW/age-restricted servers and your DM capabilities with strangers will be pretty limited.

At the end of the day, we’re seeing the growing pains of a platform that simply got too big for its own good. Discord isn’t just for “gamers” anymore; it’s a fundamental piece of the internet’s infrastructure. And like any infrastructure, it eventually gets regulated and guarded. I’ll definitely miss the old, chaotic days, but if this keeps even one more kid safe from the darker corners of the web, I guess I can live with an AI judging my “vibe” in the background. Just please, don’t make me look at that empathy banana again.

This article is sourced from various news outlets. Analysis and presentation represent our editorial perspective.